What Is Goldilocks? (Or How to Set Your Kubernetes Resource Requests)

When we open sourced Goldilocks in October 2019, our goal was to provide a dashboard utility that helps you identify a baseline for setting Kubernetes resource requests and limits. We continue to refine Goldilocks, because getting resource requests and limits just right is an ongoing challenge for most organizations.

Why Set Resource Configurations?

You can set two types of resource configurations on each container in a pod: requests and limits. A resource request is the amount of CPU and memory that Kubernetes reserves for the container when it schedules the pod. A limit is the maximum amount of that resource the container is allowed to use. Setting both keeps you from over-committing a node's resources and stops one workload from starving the others running alongside it. Requests drive scheduling: the scheduler adds up the requests of every container in a pod and then looks for a node with enough unreserved capacity to fit that pod. Kubernetes uses this information to pack workloads onto nodes efficiently.
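
To make the "find a node that can fit the pod" step concrete, here is a minimal sketch of a request-based fit check. It is a simplified model, not the real scheduler: it assumes quantities are already integers (millicores and bytes), ignores taints, affinity and every other filter, and takes the first node that fits instead of scoring all candidates. The `Node` class and helper names are hypothetical.

```python
from dataclasses import dataclass, field

MiB = 1024 * 1024


@dataclass
class Node:
    name: str
    allocatable_cpu_m: int  # CPU the scheduler may hand out, in millicores
    allocatable_mem_b: int  # memory the scheduler may hand out, in bytes
    placed: list = field(default_factory=list)  # (cpu_m, mem_b) requests of pods already placed


def pod_requests(containers):
    """Sum the CPU (millicores) and memory (bytes) requests of a pod's containers."""
    cpu = sum(c.get("cpu_m", 0) for c in containers)
    mem = sum(c.get("mem_b", 0) for c in containers)
    return cpu, mem


def fits(node, containers):
    """True if the pod's requests fit in the node's unreserved capacity.

    Only requests are checked; limits play no part in this decision.
    """
    used_cpu = sum(cpu for cpu, _ in node.placed)
    used_mem = sum(mem for _, mem in node.placed)
    cpu, mem = pod_requests(containers)
    return (used_cpu + cpu <= node.allocatable_cpu_m
            and used_mem + mem <= node.allocatable_mem_b)


def schedule(nodes, containers):
    """Place the pod on the first node that fits, or return None (pod stays Pending)."""
    for node in nodes:
        if fits(node, containers):
            node.placed.append(pod_requests(containers))
            return node.name
    return None


nodes = [Node("node-a", 1000, 2048 * MiB), Node("node-b", 4000, 8192 * MiB)]
web_pod = [{"cpu_m": 500, "mem_b": 512 * MiB}, {"cpu_m": 250, "mem_b": 128 * MiB}]
print(schedule(nodes, web_pod))  # node-a
print(schedule(nodes, web_pod))  # node-b: node-a has only 250m CPU left unreserved
```

The second copy of the pod lands on `node-b` even if `node-a` is mostly idle in practice, because the decision is made on reserved requests, not on actual usage. That is why requests that are set too high waste capacity.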

Getting Kubernetes Resource Requests Just Right

We use the Kubernetes Vertical Pod Autoscaler (VPA) in recommendation mode to get suggested resource requests for each of our apps. Goldilocks creates a VPA for each deployment in a namespace and then queries those VPAs for their recommendations. While VPA can apply resource requests automatically, the Goldilocks dashboard makes it easy to review all the recommendations at once and make decisions based on what you know about your Kubernetes environment. There’s a good post on learnk8s.io about setting the right requests and limits in Kubernetes, with a lot of code, details, and fun analogies.
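
As a rough illustration of "one VPA per deployment, in recommendation mode", the sketch below builds VerticalPodAutoscaler manifests as Python dicts. The `autoscaling.k8s.io/v1` API, `targetRef`, and `updatePolicy.updateMode: "Off"` (compute recommendations only, never evict or resize pods) are standard VPA fields; the `goldilocks-<deployment>` naming scheme and the helper function names are hypothetical choices for this sketch, not necessarily what Goldilocks itself uses.

```python
import json


def recommendation_vpa(deployment: str, namespace: str) -> dict:
    """Build a recommendation-only VerticalPodAutoscaler for one Deployment."""
    return {
        "apiVersion": "autoscaling.k8s.io/v1",
        "kind": "VerticalPodAutoscaler",
        "metadata": {
            "name": f"goldilocks-{deployment}",  # hypothetical naming scheme
            "namespace": namespace,
        },
        "spec": {
            "targetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            # "Off": the recommender still fills in status.recommendation,
            # but the VPA never evicts or updates the pods itself
            "updatePolicy": {"updateMode": "Off"},
        },
    }


def vpas_for_namespace(namespace: str, deployments: list) -> list:
    """One VPA per Deployment in the namespace."""
    return [recommendation_vpa(d, namespace) for d in deployments]


for vpa in vpas_for_namespace("demo", ["web", "worker"]):
    # kubectl accepts JSON manifests as well as YAML
    print(json.dumps(vpa, indent=2))
```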

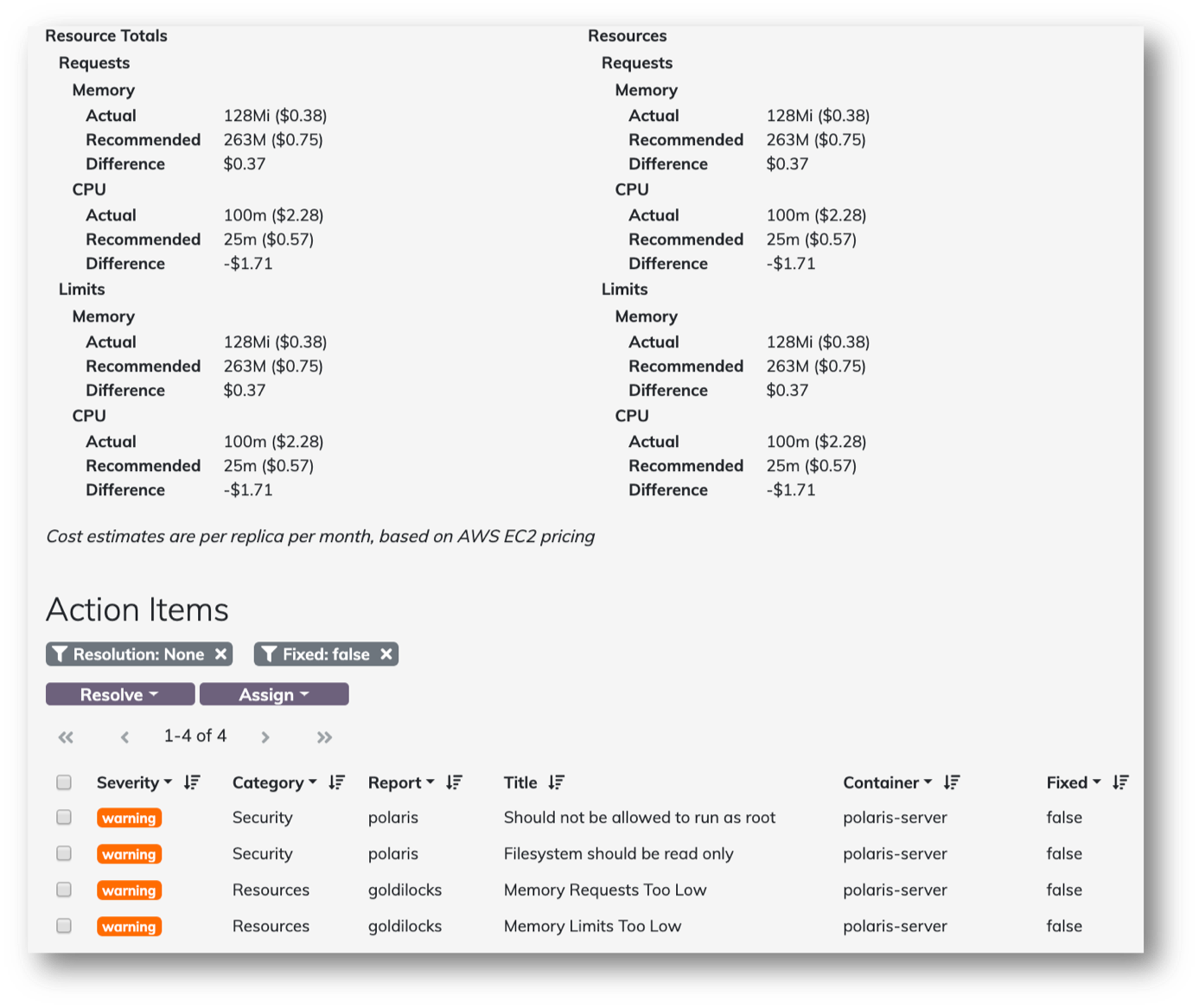

Once your VPAs are in place, this is what the recommendations look like in the Goldilocks dashboard:

How Does Goldilocks Generate Recommendations?

Goldilocks uses the Recommender in the VPA to make recommendations. According to the VPA documentation:

“After starting the binary, recommender reads the history of running pods and their usage from Prometheus into the model. It then runs in a loop and at each step performs the following actions:

- update model with recent information on resources (using listers based on watch),

- update model with fresh usage samples from Metrics API,

- compute new recommendation for each VPA,

- put any changed recommendations into the VPA resources.”

Because Goldilocks recommendations are built from each pod's historical usage, your decisions are based on more than a single point in time.
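
The sketch below shows why history matters: it turns a list of past CPU usage samples into a low, typical, and high recommendation by taking percentiles and adding a safety margin. This is a deliberately simplified stand-in, not the VPA recommender's algorithm: the real recommender keeps decaying histograms and applies confidence-based adjustments. The 50th/90th/95th percentiles and the 15% margin here are illustrative assumptions, and the function names are hypothetical.

```python
def ceil_div(a, b):
    """Integer ceiling division for non-negative a and positive b."""
    return -(-a // b)


def percentile(samples, pct):
    """Nearest-rank percentile (pct in 1..100) of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, ceil_div(pct * len(ordered), 100))
    return ordered[rank - 1]


def recommend(cpu_samples_m, margin_pct=15):
    """Turn historical CPU usage (millicores) into lowerBound/target/upperBound.

    Assumption: the percentiles and the safety margin are illustrative only;
    the real VPA recommender does not use a plain percentile like this.
    """
    def padded(pct):
        # Pad the percentile by margin_pct percent, rounding up
        return ceil_div(percentile(cpu_samples_m, pct) * (100 + margin_pct), 100)

    return {"lowerBound": padded(50), "target": padded(90), "upperBound": padded(95)}


history = [100, 120, 110, 300, 130, 125, 115, 140, 135, 500]
print(recommend(history))
# {'lowerBound': 144, 'target': 345, 'upperBound': 575}
```

A single reading taken at the wrong moment could have suggested 100m or 500m; looking across the whole history produces a range that reflects both typical load and occasional spikes.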

Goldilocks shows two types of recommendations in the dashboard, based on Kubernetes Quality of Service (QoS) classes. Kubernetes assigns a QoS class to every pod automatically, based on the requests and limits of its containers, and uses that class to decide which pods to evict first when a node runs out of resources. There are three QoS classes: Guaranteed, Burstable, and BestEffort. A pod is placed in the Guaranteed class when every one of its containers has CPU and memory limits equal to its CPU and memory requests. A pod that doesn't meet that bar but has at least one CPU or memory request or limit set (for example, a request lower than its limit) is given the Burstable class. The BestEffort class is for pods made up of containers that have no requests or limits at all. (We do not recommend doing this at all.)
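
The classification rules can be sketched as a small function. This is a simplified model, assuming each container is a dict with optional `requests` and `limits` maps whose quantities are already integers in a consistent unit (for example millicores for CPU, MiB for memory); it only looks at CPU and memory and ignores init containers. It also mirrors one Kubernetes defaulting rule: if a container sets a limit but no request, the request defaults to the limit.

```python
def qos_class(containers):
    """Classify a pod's QoS from its containers' CPU/memory requests and limits."""
    any_set = False
    guaranteed = True
    for c in containers:
        requests = c.get("requests", {})
        limits = c.get("limits", {})
        if any(r in requests or r in limits for r in ("cpu", "memory")):
            any_set = True
        for resource in ("cpu", "memory"):
            limit = limits.get(resource)
            # A missing request defaults to the limit when a limit is set
            request = requests.get(resource, limit)
            if limit is None or request != limit:
                guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


print(qos_class([{"requests": {"cpu": 500, "memory": 256}, "limits": {"cpu": 500, "memory": 256}}]))  # Guaranteed
print(qos_class([{"limits": {"cpu": 500, "memory": 256}}]))  # Guaranteed (requests default to limits)
print(qos_class([{"requests": {"cpu": 250}, "limits": {"cpu": 500}}]))  # Burstable
print(qos_class([{}]))  # BestEffort
```

Note that one container without limits is enough to drop a multi-container pod from Guaranteed to Burstable.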

From that historical data, Goldilocks generates a recommendation for each of two QoS classes:

- For Guaranteed, we take the target field from the recommendation and set that as both the request and limit for that container. This guarantees that the resources requested by the container will be available to it when it gets scheduled, and generally produces the most stable Kubernetes clusters.

- For Burstable, we set the request to the lowerBound and the limit to the upperBound from the VPA object. The scheduler uses the request to place the pod on a node, but the pod can use more resources, up to the limit, before it’s throttled (CPU) or killed (memory). This can be helpful for handling spiky workloads, but if too many pods on a node burst at the same time, the node can quickly become overcommitted and overwhelmed.
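
The two mappings above are simple enough to write down directly. The sketch below reads a dict shaped like a VPA object's `status` (`recommendation.containerRecommendations[]` with `containerName`, `lowerBound`, `target`, and `upperBound`) and produces the Guaranteed and Burstable settings for each container. The function name is hypothetical and the sample values are made up; quantities are passed through as Kubernetes quantity strings without parsing.

```python
def qos_recommendations(vpa_status):
    """Map each container's VPA recommendation to Guaranteed and Burstable settings.

    Guaranteed: request = limit = target.
    Burstable:  request = lowerBound, limit = upperBound.
    """
    out = {}
    for rec in vpa_status["recommendation"]["containerRecommendations"]:
        out[rec["containerName"]] = {
            "Guaranteed": {"requests": dict(rec["target"]), "limits": dict(rec["target"])},
            "Burstable": {"requests": dict(rec["lowerBound"]), "limits": dict(rec["upperBound"])},
        }
    return out


# Made-up sample shaped like a VPA object's status field
status = {
    "recommendation": {
        "containerRecommendations": [{
            "containerName": "web",
            "lowerBound": {"cpu": "15m", "memory": "100Mi"},
            "target": {"cpu": "25m", "memory": "256Mi"},
            "upperBound": {"cpu": "120m", "memory": "600Mi"},
        }]
    }
}
print(qos_recommendations(status)["web"]["Burstable"])
# {'requests': {'cpu': '15m', 'memory': '100Mi'}, 'limits': {'cpu': '120m', 'memory': '600Mi'}}
```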

How Accurate Is Goldilocks?

The Goldilocks open source software is based entirely on the underlying VPA project, specifically the Recommender. In our experience, Goldilocks is a good starting point for setting your resource requests and limits. But every environment is different, and Goldilocks isn’t a replacement for tuning your applications to your specific use cases.

Contributing to Goldilocks

Goldilocks is open source and available on GitHub. We continue to make a lot of changes, improving its ability to handle large clusters with hundreds of namespaces and VPA objects. We’ve also changed how Goldilocks is deployed: its chart now includes a VPA sub-chart that you can use to install both the VPA controller and the resources it needs. We made many of these changes with Harrison Katz at Squarespace, based on invaluable feedback from him and the team there. We want to keep improving our open source projects, and welcome your contributions!

Goldilocks is also part of our Fairwinds Insights platform, which provides multi-cluster visibility into your Kubernetes clusters, so you can configure your applications for scale, reliability, resource efficiency, and security.

You can use Fairwinds Insights for free, forever.

Join our open source group, and attend our next meetup on September 23 or December 14!